February, 2026.

17 minutes to read and 28 to listen.

I will begin by quoting the new essay ”The Adolescence of Technology” from January 2026 by Dario Amodei, CEO and co-founder of Anthropic, which discusses the imminent, colossal threats of AI to mankind.

”Humanity is about to be handed almost unimaginable power, and it is deeply unclear whether our social, political, and technological systems possess the maturity to wield it.”

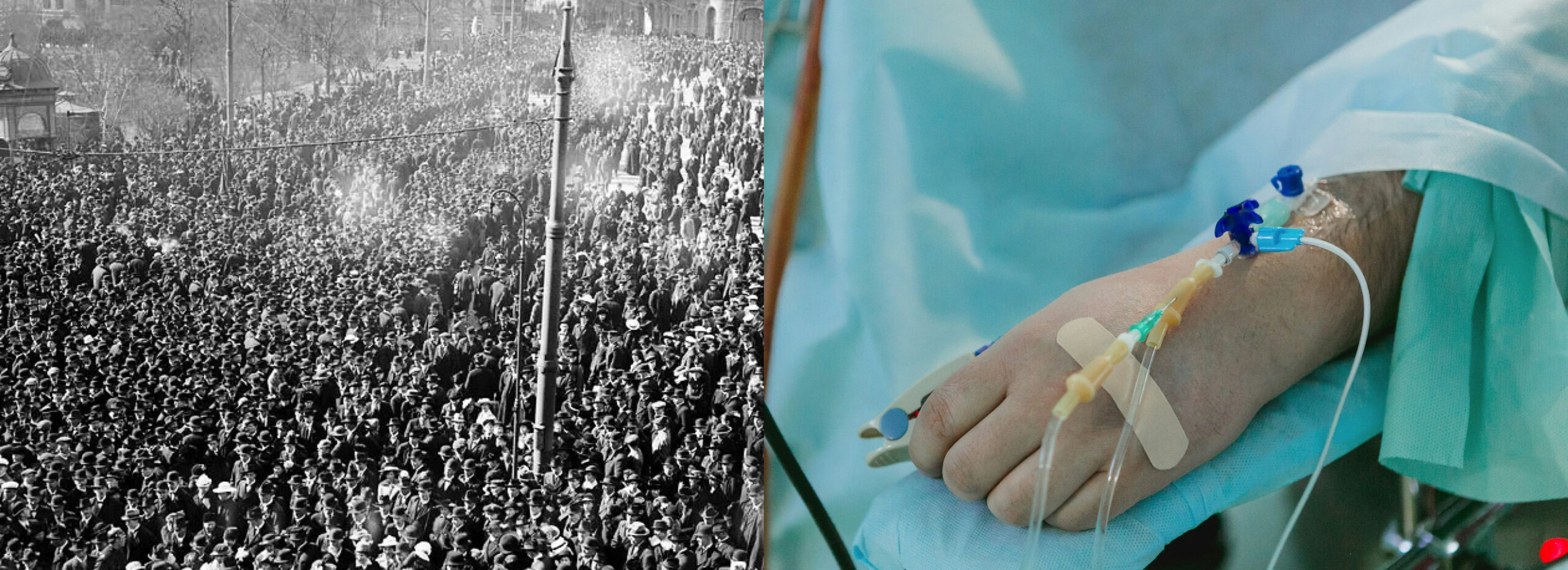

Those who really know about the current progress of AI say we will all very soon have geniuses in every cognitive field in our phones, including biotechnology. Amodei continues: ”I am concerned that a genius in everyone’s pocket could remove that barrier, essentially making everyone a PhD virologist who can be walked through the process of designing, synthesizing, and releasing a biological weapon step-by-step.”

He concludes: ”I am worried there are potentially a large number of such people out there, and that if they have access to an easy way to kill millions of people, sooner or later one of them will do it. Additionally, those who do have expertise may be enabled to commit even larger-scale destruction than they could before.”

Dario Amodei shares this concern with other highly knowledgeable insiders in the US: the AI researchers and the other CEOs of the leading AI companies.

AI progress is extremely rapid right now. It ought to make those with the power to change our destiny listen and act. There are only two of them: the US and China, which are developing AGI and then Superintelligence.

Tragically, they do not do so. It is as if it does not exist as a threat.

The speed of AI development lately is shocking, and many of the most knowledgeable AI people in the US are panicking right now. To know, as an outsider, where we are, you have to listen to what the insiders in the US are saying at this moment. Thankfully, they do podcast interviews with sharp podcast journalists.

Dario Amodei has participated in several such interviews in January of 2026, stressing that we might enter the stage of superhuman AI as early as 2027. He is, to say the least, alarmed by the consequences. More so than others. And he probably knows more than anybody else about the potential AI is about to have.

AI company leaders and other leading AI experts, who know what is about to happen, are more than scared. Let us call them the US AI pioneers.

Most other important AI pioneers in the know now believe AGI will be here very soon. Mustafa Suleyman, co-founder of Google DeepMind and presently in charge of Microsoft AI, also thinks so. He was interviewed by the Financial Times in February 2026. He thinks most white-collar jobs will soon be automated. But how many, and how soon?

Mustafa Suleyman: ”I think we are going to have a human level performance on most, if not all, professional tasks. So white-collar work, where you sit by a computer, being a lawyer, accountant, project manager or marketing person.”

And Suleyman’s most important statement:

”Most of those tasks will be fully automated by an AI within the next 12 to 18 months.”

This is the opinion of AI researchers too.

Many of us are sensing it too, as we experience the rapid progress of our intelligent chatbots. Some of us might even vibe code our own rather complex apps.

It is obvious that people with power, those who could influence destiny, are not listening and not reacting in the slightest. No nation, national body or powerful global organisation is even trying to help avoid the possible tragedy of unsafe superhuman AI.

We are most likely pushing ourselves off a cliff.

Now, AI is starting to become extremely intelligent and able to solve more and more complicated and potentially dangerous problems, as Dario Amodei writes. A few years ago, before the first large language model, GPT-1, was born in 2018, most people with insight thought this rapid development was still a few decades away. GPT-1 was simple and did rudimentary things, but it heralded the Age of AI. These large language models (LLMs) are the foundation of AI, and they run chatbots like ChatGPT.

Below, I have collected a few interviews conducted in January, 2026, with four of the most knowledgeable persons in AI. Amongst them: Dario Amodei.

Donald Trump has decided that there will not be any safety measures or guardrails on AI development at all. Trump’s opinion is that if the US does not win the race to AGI and then Superintelligence, China will become the new superpower, and the US forever weak.

The hypothesis is that the country that wins that race will forever have an explosive advantage in AI that no other country can catch up with.

It becomes clear in a clip (starting at 4:40) with Trump from an NBC interview from February 2026. The next presidential election is in November 2028. And Dario Amodei predicts AGI perhaps as soon as 2027.

It will be great for jobs, the military and medical innovations, Trump says. There will probably be some bad things too, he says, without mentioning them. It sounds trivial. It is apparent that he does not know anything about the potential of AI.

Who knows this better than Dario Amodei?

Mike Krieger, Anthropic’s Labs chief, revealed in February of 2026 that Anthropic’s internal development teams now use Claude AI to write nearly 100 percent of the code for the company’s products. Its popular Claude Cowork was developed entirely with Claude AI. In 1.5 weeks! Claude Code has begun to improve its own code, and that accelerating self-improving capacity is what will take us to superhuman AI.

The best coders in the world use it. A large part of the US AI pioneers believe that Claude Code will help Anthropic reach AGI and Superintelligence first.

And hackers are already using it for criminal purposes, like ransomware. It made it possible for one person, without much knowledge about AI tools, to blackmail companies by stealing valuable information. One person did it! Claude Code hacked its way in, found the information and even wrote the blackmail letters. This used to require a team of highly skilled hackers.

Anthropic believes hacking will only get worse, unless there are safety breakthroughs. Cyberattacks destroying financial systems, like banks, are a major concern, according to US AI pioneers. Sweden is cash-free. What would happen if it were no longer possible to pay for food with your debit card?

We are somewhere in the second act of a sci-fi horror movie. That appears to be Dario Amodei’s clear opinion. We are about to enter the third and fight for our existence.

The person responsible for safety research at Anthropic quit in February 2026. He has warned of AI being used for bioterrorism for quite some time.

Hopefully there is one way to avoid this tragedy.

China and the US must sit down together and reach an agreement to pause development of an AI that is getting more powerful and dangerous by the day. Stop it until the leading AI companies have developed adequate safety measures. This is the position of Dario Amodei and other pioneers.

Leaders of the few competing AI companies that are creating this alien superintelligent species know what they are doing. But only Dario Amodei has the courage to really speak out about it. Anthropic was started by a group of mostly AI researchers who wanted to build safe AI. They test their models rigorously, write papers about them and inform the public.

Ignorance mixed with panic appears to be one major ingredient causing action paralysis amongst the influential establishment with this potential power. Kind of like a deer that freezes before being run over. Or like the protagonists in the film Melancholia, who more or less accept their destiny when a rogue planet is closing in to annihilate Earth.

Most of us know about and feel the risks. So why are they not at least present in the agenda-setting European political and mass media discussions in an important way by now? (However, relatively few seem to know how critical the situation is.)

AGI stands for Artificial General Intelligence: the point when AI can do any cognitive task as well as a top human performer of that task. All these different cognitive capabilities will exist in what is an artificial brain, just like our biological one.

This is one explanatory hypothesis. This means AI brains will be able to do everything the best human ones can do with a computer in any field. All this intelligence in one AI. And at a minimal fraction of the cost.

This could happen within 2-3 years, say many of the US AI pioneers. Dario Amodei, as mentioned, believes superhuman AI will come before that.

Superintelligence, the pinnacle, will be reached when AI can do anything a cognitive worker can do but so much better than the top performer in that area. That is when we all will have these geniuses in any cognitive field in our pockets. With self-improving AI, Superintelligence will keep on getting smarter. Is there even a limit? Many US AI pioneers think we will get there within ten years.

The threats are many and varied. A lethal virus created by an evil terrorist group, or by an individual with an interest in chemistry, could cause catastrophe or even doom. Foremost experts on biotechnology acknowledge this growing risk too. I have written an extensive article on how AI increases that risk dramatically, based on information from, amongst others, biotechnology experts. Their opinion is that superhuman AI will make it possible.

You can also listen to this article in English. It should be an eye-opener for those who are not updated on the existential risks with AI.

Dario Amodei fears it, as does Sam Altman, CEO and co-founder of OpenAI, the company behind ChatGPT, who otherwise does not talk much about AI risks in general. OpenAI is also a contender to reach AGI and Superintelligence first.

But Sam Altman does discuss his worries about an ever more intelligent AI helping a bad actor create a bioweapon that can wipe out huge numbers of humans.

At a panel discussion in February 2026, Altman said (30 minutes): ”There are many ways AI can go wrong. In 2026, certainly one of them that we are quite nervous about is bio. The models are quite good at bio. And right now our and the world’s strategy is to restrict who gets access to them, and put a bunch of classifiers on them to not help people make novel pathogens.”

And Sam Altman continues darkly: ”I do not think that is going to work for much longer. And the shift I think the world needs to make for AI security generally and biosecurity in particular is to move from one of like blocking to one of resilience.”

No one has a clue today how to make this come true.

Boaz Barak, professor of computer science at Harvard and a technology expert at OpenAI, posted a tweet in June of 2025:

”Some might think that biorisk is not real, and models only provide information that could be found via search. That may have been true in 2024 but is definitely not true today. Based on our evaluations and those of our experts, the risk is very real.”

At the beginning of 2026, the new Large language models — Google Gemini 3 Deep Think, Anthropic’s Opus 4.6 and GPT 5.3 Codex — are way more advanced than those that were frontier models when Boaz Barak made that remark.

Is it really worth this great existential risk to maybe use superintelligent AI to find cures for cancers that have been deadly until now? Or to develop car batteries that are smaller and more efficient? Maybe even energy sources that are free and limitless? Or to explore the universe? And so on.

Especially when, on top of these existential risks, most jobs may also disappear in the near future.

What would an unemployment rate of, let us say, 30 to 40 percent within 10 to 15 years lead to? For individuals, for families, for society and for politics? What is the difference if it happens in 20 to 25 years?

Geoffrey Hinton, the researcher who was awarded the Nobel Prize in Physics in 2024 for the development of neural networks, the actual foundation of AI, says that the job that will remain the longest is plumber. We must accept the potential as time goes by.

To those who believe there will be many new jobs with superintelligent AI, he says: ”Which jobs would that be?” If AI, with time and with robots, can do almost all cognitive and physical jobs, these new jobs would have to be, to say the least, many. People appear to think AI will not evolve. They laugh at the robots falling over and ChatGPT hallucinating. We have to realise we are in the AI Stone Age. The beach is still peaceful, but that wave is about to hit us.

Therefore Geoffrey Hinton, like most of the other pioneers, is urgently calling for politicians to introduce Universal Basic Income, UBI. This would provide a basic income for all those affected by unemployment because of AI. It would help prevent human tragedies. People could, and hopefully would, remain calm, and it might help society avoid severe unrest.

Roman V. Yampolskiy, professor of Computer Science and Engineering at the University of Louisville, is another of the world’s most renowned AI researchers, especially on safe AI, who is convinced of the need for UBI. Other pioneers share this opinion. Elon Musk and Demis Hassabis are two of them.

Yampolskiy says: ”It seems like the only way for governments to prevent revolutions and civil unrest.”

Pioneers say AI will start causing increasing mass unemployment from 2027 and on.

A society that wants to remain stable must prepare. Not one country is doing so.

Dario Amodei explained in an interview from May 2025 that unemployment in the US could reach 20 percent within five years. Countries with a higher unemployment rate right now than the US ought to be hit harder.

Amodei also believes AI could eliminate half of all entry-level jobs within five years. This bleak future includes new graduates in law, finance, economics, and such. These are the positions young people begin with when they leave university, as a way into their professional fields.

You can watch desperate selfie videos on social media where US university graduates talk about applying for hundreds of jobs without getting even one interview. Many of them have advanced degrees in computer science and software engineering. Until recently, some of them worked for Google, Meta and similar companies.

Another danger, according to Dario Amodei, is that countries with access to superhuman AI (only China and the US are contenders) will become so much more powerful economically and militarily than those without. Europe does not have one real AI company. It is as if this miracle did not happen on our continent.

We can only hope that the US will share their best AI intelligence with us so that our industries can compete with the US and Chinese companies. If we lose our competitive edge because they have innovative superbrains, what will happen to us?

The risk is huge that we will become existentially and economically dependent on the US AI companies. The US could treat Europe any way it wants. And we would have to accept it and bow to our masters.

Demis Hassabis, co-founder and CEO of Google DeepMind, probably Anthropic’s number one competitor for AGI and Superintelligence, estimates that AI’s effects on individuals and society will be extreme and in ways we cannot imagine. Hassabis thinks we will have AGI one or two years after 2030 (his definition entails that AGI can also ask new scientific questions on its own and not only answer human questions), but his analysis otherwise mirrors Amodei’s.

Demis Hassabis in an interview at the World Economic Forum:

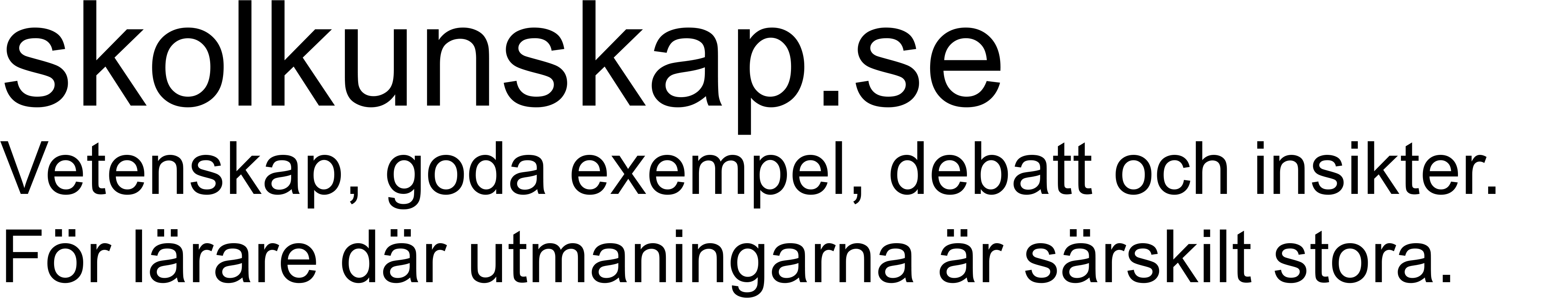

”It is going to be an age of disruption, just like the industrial revolution was. Maybe 10 X of that, which is kind of unbelievable to think about, and maybe 10 times faster. So I usually describe it is going to be 10 times bigger and 10 times faster than the industrial revolution. 100 times bigger.”


In this interview, 13:50 into it, Dario Amodei says: ”It is hard enough for the (AI) companies and the US to handle this crazy commercial race. But in theory, we could pass laws that help rein the (AI) companies in. But it is almost impossible to do that if you have an authoritarian adversary who is out there building the technology almost as fast as we are. It creates a terrible dilemma.”

Geoffrey Hinton thinks Superintelligence could be a reality in as little as five years. Until recently, he believed it would take decades. Other pioneers are shocked by how fast development is. Superintelligence is AGI on supersteroids.

Dario Amodei and Demis Hassabis make crucial observations in the two interviews below. They took place at the World Economic Forum in Davos around January 20, 2026. One is 32 minutes, the other is 30 minutes.

Hassabis says the AI companies cannot achieve adequate safety measures and rules by themselves. They, and the US and China, are in a race with each other where if one stops for a mere afternoon it might lose. That is the opinion of both competing countries and of these AI companies.

And how can they trust each other to abide by a treaty? It is not difficult to hide AI labs. A true prisoner’s dilemma if there ever was one.


At 11:40 into the above video, Dario Amodei talks about the sci-fi film Contact, based on a book by Carl Sagan, where humans discover alien life. There is one scene where humans are preparing questions to ask the alien life forms. Amodei quotes them: ”How did you do it? How did you manage to get through this technological adolescence without destroying yourselves? How did you make it through?”


At 11:40 in the solo interview above, Demis Hassabis focuses on the urgency of pausing development of AI when we are getting close to AGI and Superintelligence. He has also, in other interviews, talked about the urgent need for the leading AI companies competing for AGI and Superintelligence to develop common rules and guardrails, so that AI stays safe. He has said that a country or organisation outside the US must take this responsibility. The US cannot and will not do it, because it believes it is in an existential race with China. In the above interview, Hassabis says: ”International cooperation is a bit tricky at the moment.” And about the possibility of cooperation between the leading AI companies: ”I think a lot of it is about understanding what is at stake.”

Demis Hassabis has said Europe could fill that void by creating a platform for discussions between the two superpowers, since they are trapped in this existential race. The aim would be to stop development for a period when we are so close to AGI, so that safe superhuman AI can be developed before that leap.

Hassabis has said that the leading AI companies are ready for cooperation on safety, and that they would participate. Dario Amodei thinks a longer pause is absolutely necessary, until they know how to create safe AGI and Superintelligence.

Another revelatory interview (below) from the World Economic Forum is with historian and philosopher Yuval Noah Harari (author of Sapiens and Nexus), and Max Tegmark, professor at Massachusetts Institute of Technology, MIT, with AI as his research area.

Tegmark dedicates his time to creating safe AI. He has spoken emotionally about his children maybe never experiencing being in a forest, never touching trees.

Harari is one of the greatest thinkers on the risks of AI. Tegmark is trying to make the world, especially those with power to change our destiny, aware. Hoping change can be reached through awareness. In its entirety, the below interview with them is 32 minutes.

It shows the acute threats at the beginning of 2026.


In the January 2026 panel discussion, Yuval Noah Harari and Max Tegmark talk about the human, societal and existential risks with the critically dangerous AI that is arriving very soon. They are, to put it mildly, deeply worried. The new AI models are so potent. Harari: ”What are the implications for human psychology and society? We have no idea. We will know in 20 years. This is the biggest psychological and social experiment in history, and we are conducting it and no one has any idea what the consequences will be.”

People say: this is like the Y2K-bug scare, when everybody thought computers would stop working when we entered the year 2000 because of errors related to the formatting and storage of calendar data for dates in and after the year 2000.

But nothing happened. Perhaps because so much effort was put into securing the systems.

Politicians and liberal editorial writers say and write: look, there is no job elimination going on. This is just another technological innovation that will create tons of new jobs, with higher productivity and higher wages.

Hassabis and Amodei also say: well, not now, but permanent job destruction will begin in 2027. That is when companies will start having swarms of specialised cognitive AI agents that can do more and more cognitive jobs better and cheaper than most human experts in different fields, the AI pioneers say. That ability will become increasingly efficient. And AI can also do the new cognitive jobs that are created, where you work with a computer, they say.

Four new examples that are typical of what is happening — to show the speed and force of progress at the moment.

An advanced version of Google Gemini achieved gold-medal standard at the International Mathematical Olympiad in 2025. This is considered a pivotal moment in AI development.

Jaana Dogan, one of Google’s principal engineers, in a tweet on January 2, 2026:

”I’m not joking and this isn’t funny. We have been trying to build distributed agent orchestrators at Google since last year. There are various options, not everyone is aligned… I gave Claude Code a description of the problem, it generated what we built last year in an hour.”

David Kipping, professor of astronomy at Columbia University, explains in a 3-minute video on X from February 2026 how researchers use AI to push the frontier of scientific knowledge. It is already a necessity for cutting-edge research. And we are still in the infancy of AI.

Matt Shumer, a software engineer and coder, wrote a long article on X that received millions of reads and thousands of comments. It was published on February 9, 2026, against the background of the extremely powerful new AI models from Anthropic and OpenAI that were released shortly before. Matt Shumer writes:

“I am no longer needed for the actual technical work of my job. I describe what I want built, in plain English, and it just… appears. Not a rough draft I need to fix. The finished thing.”

And he continues: ”I tell the AI what I want, walk away from my computer for four hours, and come back to find the work done. Done well, done better than I would have done it myself, with no corrections needed. A couple of months ago, I was going back and forth with the AI, guiding it, making edits. Now I just describe the outcome and leave.”

And so it goes on and on. Already. To show the limitless potential of AI.

A common fear amongst the pioneers, as mentioned, is the risk of somebody creating their own custom-designed lethal virus. All the tools might already exist. Unless superintelligent AI is 100 percent safe, it will help with new, simple recipes to follow. It only takes one bad actor, probably skilled, to coax out one such recipe. Imagine hundreds of bad actors trying, some of them extremely motivated and skilled terrorists. Only one of them needs to succeed.

AI people in Silicon Valley usually have a so-called p(doom), that is, their estimated probability of doom or great catastrophe. Many of the US AI pioneers believe it to be above 50 percent.

And quite a few are convinced the US and China will only try to cooperate to enforce safe superhuman AI after there is a catastrophe of sufficient magnitude. Disaster must happen first. They believe it may take tens of thousands or more humans dying in bioterrorism before the superpowers react.

Geoffrey Hinton has a p(doom) of higher than 50 percent with Superintelligence. This is what he said in 2024:

”I actually think the risk is more than 50 percent, the existential threat, but I don’t say that because there are other people who think it is less.”

What a statement from probably the sharpest mind when it comes to estimating Superintelligence’s devastating potential.

Elon Musk thinks p(doom) could be up to 30 percent. And so it goes on. Eliezer Yudkowsky, a founding father of research on safe AI, says doom is certain if Superintelligence is not stopped. (There is a 0.5 percent chance we survive.) If it cannot be stopped any other way, he thinks the data centers that fuel Superintelligence must be bombed as a last resort.

In a 2-minute clip from his Nobel Prize banquet speech, Geoffrey Hinton warns of the consequences of superhuman AI.


Nick Boström, professor at Oxford University, and one of the most insightful minds when it comes to the risks of AI, is as deeply worried as Max Tegmark. At the end of the 1990s, Boström predicted that superhuman AI would arrive approximately now. The world has had time to prepare.

This is one global call to action with 300+ signatories, including Geoffrey Hinton, Yuval Noah Harari, Yoshua Bengio, Jennifer Doudna (Nobel Laureate in Chemistry for CRISPR, another tool for creating DIY bioweapons), Stuart Russell, and many more. Many of them are top AI researchers.

They all, including Demis Hassabis and Dario Amodei, express the need for a forum so that the US and China, with the ultimate and only power to change destiny, can meet and truly realise what can be lost. And act together to avoid it.

The US and Chinese leaders must know about the destructive human, societal and existential risks. But Donald Trump has said that no constraints are allowed on the US AI companies. This race is about the survival of the US as the greatest power in the world.

The US and Chinese leaders also have children and grandchildren. They ought to be motivated. Why are the godlike generals of the leading US AI companies not explaining the existential, human and societal risks to Donald Trump? Then again, maybe they are, and he just does not listen.

It is so weird and tragic that no one outside the US (the EU, why not Sweden, the United Nations or maybe the Norwegian Nobel Peace Prize Committee) is even trying to create a platform where China and the US can meet and discuss the risks, and hopefully create and introduce safety measures that can at least greatly mitigate them.

Dario Amodei says the leading US AI companies need several years to develop safe superhuman AI. He wants a pause in AI development that lasts that long.

But the hurdle is, of course, trust. If your opponent believes there is a binding, ironclad agreement and pauses, while you still develop superhuman AI in secret, then you win.

I will end by quoting Dario Amodei’s essay ”The Adolescence of Technology” once more:

”I think it should be clear that this is a dangerous situation—a report from a competent national security official to a head of state would probably contain words like ‘the single most serious national security threat we’ve faced in a century, possibly ever.’”

Who knows this better than Amodei does? Right now.

Without swift preparations it can go, to say the least, badly.

Then we can say, if it still goes wrong:

At least, we tried.